Best Practices for SaaS on AWS

Amazon Web Services (AWS) has been an advocate for robust cloud security since it launched in 2006. When you use AWS as your cloud infrastructure provider, you operate under a shared responsibility model that distributes security roles between the provider and the customer. As a public cloud vendor, AWS owns the infrastructure, physical network and hypervisor, and you, the customer, own the workload applications, virtual network, access to the environment/account and the data.

The SaaS Startup Kit is a set of Go libraries and boilerplate Golang code for building scalable software-as-a-service (SaaS) applications. Alongside it, we maintain an open-source set of GO DevOps tools for building a continuous deployment pipeline for GitLab CI/CD with serverless infrastructure on AWS.

Since we primarily use AWS for projects leveraging the SaaS Startup Kit and corresponding GO DevOps tools, this blog post outlines the best practices you can implement to ensure data security and auditability.

AWS Shared Responsibility Model

Like most cloud providers, Amazon operates under a shared responsibility model. Amazon takes responsibility for the security of its infrastructure, and has made platform security a priority in order to protect customers’ critical information and applications. Amazon detects fraud and abuse, and responds to incidents by notifying customers.

However, we are responsible for ensuring our AWS environment is configured securely, ensuring data is not shared with anyone it shouldn’t be shared with inside or outside our organization, identifying when a user misuses AWS, and enforcing compliance and governance policies.

- Amazon’s responsibility – Since it has little control over how AWS is used by us (its customers), Amazon has focused on the security of AWS infrastructure, including protecting its computing, storage, networking, and database services against intrusions. Amazon is responsible for the security of the software, hardware, and the physical facilities that host AWS services. Amazon also takes responsibility for the security configuration of its managed services such as Amazon RDS, Elastic MapReduce, S3, etc.

- Customer’s responsibility – As customers of AWS, we are responsible for the secure usage of AWS services that are considered unmanaged. For example, while Amazon has built several layers of security features to prevent unauthorized access to AWS, including multi-factor authentication, it is the responsibility of the customer to make sure multi-factor authentication is turned on for users, particularly for those with the most extensive IAM permissions in AWS.

This diagram outlines AWS responsibilities for security vs our responsibilities as their customer:

AWS Trusted Advisor Security

AWS Trusted Advisor is a service that provides a real-time review of your AWS accounts and offers guidance on how to optimize resources to reduce cost, increase performance, expand reliability, and improve security.

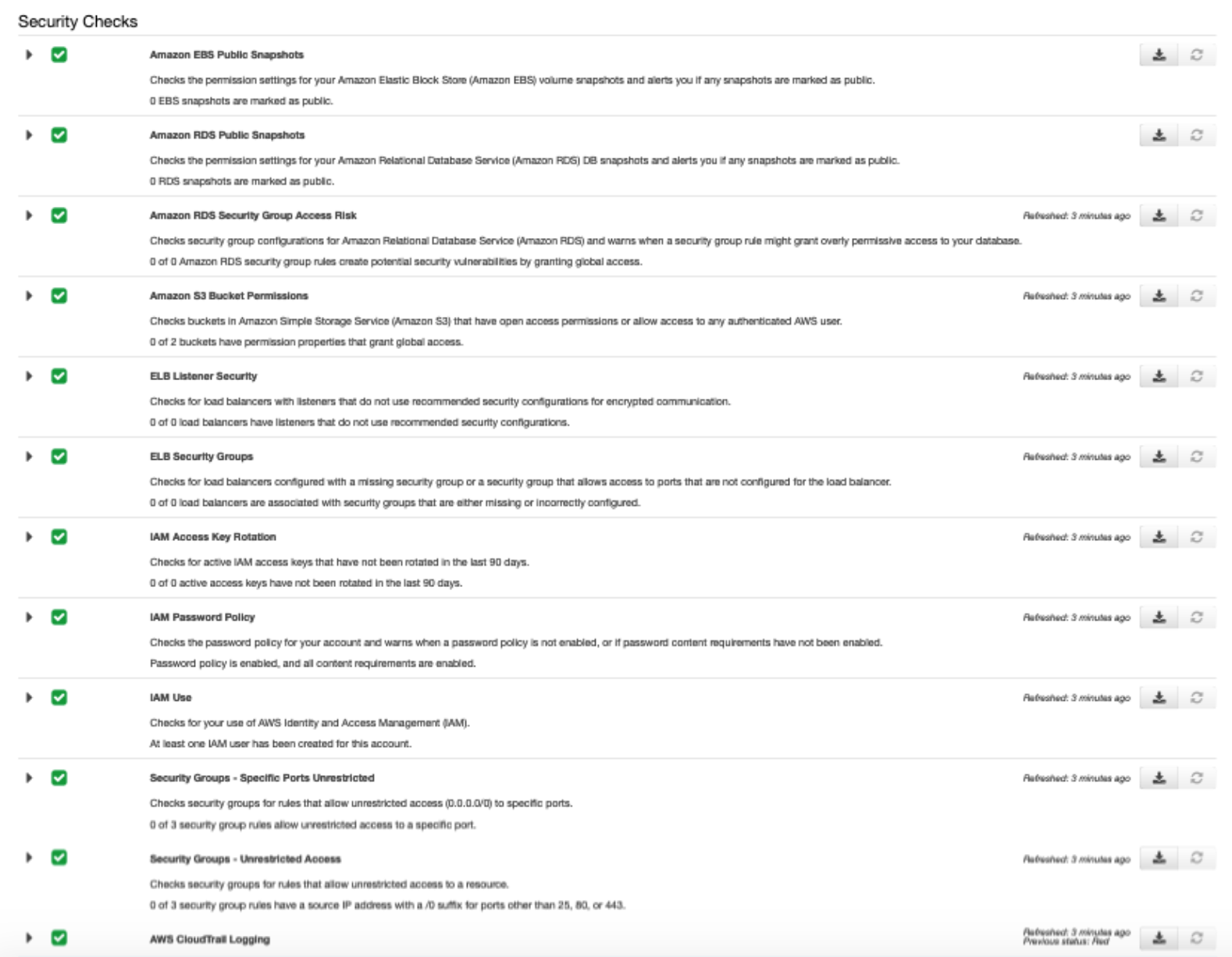

The “Security” section of AWS Trusted Advisor should be reviewed by you or another IT executive on a regular basis to evaluate the health of your AWS account(s). Currently, there are multiple security-specific checks, ranging from IAM access keys that haven’t been rotated to insecure security groups. Trusted Advisor makes it easier to perform a weekly review of your AWS accounts.

Below is a Trusted Advisor report of security checks for an account that has a project that leverages the SaaS Startup Kit. These security checks were generated by scanning our infrastructure and comparing it with Amazon’s own best practices. As you can see in the report below, we pass all of these security checks. Our open-source set of GO DevOps tools is a huge help.

Best Practices for SaaS in GO on AWS

One of the most important things you can do as a customer of AWS to ensure the security of your resources is to maintain careful control over who has access to them. This is especially true since we use programmatic access. Programmatic access allows us to invoke actions on our AWS resources through our custom applications for DevOps and by the applications themselves.

To maintain a meticulous security posture across our cloud environments and abide by the AWS Shared Responsibility Model, organizations like yours and ours must be disciplined about applying cloud security best practices and back those efforts with automated, continuous monitoring.

Auditability with AWS CloudTrail

AWS CloudTrail is the tool that allows you to record API logs for security analysis, compliance auditing and change tracking. With it, you can create trails of breadcrumbs that lead back to the source of any change made to any of your AWS environments. This capability allows organizations to continuously monitor activities in AWS for compliance auditing and post-incident forensic investigations. To ensure CloudTrail provides effective auditability, you must implement these tight security configurations:

- Enable CloudTrail across all geographic regions and AWS services to prevent activity monitoring gaps.

- Make sure CloudTrail is enabled for any new deployment regions.

- Turn on CloudTrail log file validation so that any changes made to a log file after it has been delivered to the S3 bucket are trackable, ensuring log file integrity.

- Enable access logging for the CloudTrail S3 bucket so that we can track access requests, identify potentially unauthorized or unwarranted access attempts, and maintain a record of who has access and how frequently they use it.

- Require multi-factor authentication (MFA) to delete CloudTrail S3 buckets, and encrypt all CloudTrail log files in flight and at rest.
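
The checklist above can be turned into an automated check. The following is a minimal sketch in Go, assuming a hypothetical `TrailConfig` struct that you populate yourself (for example from your deployment configuration); the struct, its field names and the `Audit` function are illustrative and are not part of CloudTrail or the GO DevOps tools.

```go
package main

import "fmt"

// TrailConfig is a hypothetical summary of one trail's settings.
// It is not an AWS SDK type; fill it in from whatever source you trust.
type TrailConfig struct {
	Name              string
	MultiRegion       bool // trail records activity in all regions
	LogFileValidation bool // log file integrity validation is on
	BucketAccessLogs  bool // access logging is on for the log bucket
	BucketMFADelete   bool // MFA is required to delete from the log bucket
	EncryptedAtRest   bool // log files are encrypted at rest
}

// Audit returns one finding for each checklist item the trail fails.
func Audit(c TrailConfig) []string {
	checks := []struct {
		ok  bool
		msg string
	}{
		{c.MultiRegion, "trail does not cover all regions"},
		{c.LogFileValidation, "log file validation is disabled"},
		{c.BucketAccessLogs, "S3 bucket access logging is disabled"},
		{c.BucketMFADelete, "MFA delete is not required on the S3 bucket"},
		{c.EncryptedAtRest, "log files are not encrypted at rest"},
	}
	var findings []string
	for _, ch := range checks {
		if !ch.ok {
			findings = append(findings, c.Name+": "+ch.msg)
		}
	}
	return findings
}

func main() {
	// Example values only: two of the five settings are enabled.
	cfg := TrailConfig{Name: "example-trail", MultiRegion: true, LogFileValidation: true}
	for _, f := range Audit(cfg) {
		fmt.Println(f)
	}
}
```

A check like this could run in a CI job so that a trail that drifts from the checklist is flagged before the weekly review.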

Follow Best Practices for Identity and Access Management (IAM)

IAM is an AWS service that provides user provisioning and access control capabilities for AWS users. AWS administrators can use IAM to create and manage AWS users and groups and apply granular permission rules to users and groups of users to limit access to AWS APIs and resources. To make the most of IAM, you need to follow these best practices:

- When creating IAM policies, ensure that they are attached to groups or roles rather than individual users to minimize the risk of an individual user getting excessive and unnecessary permissions or privileges by accident.

- Provision access to a resource using IAM roles instead of providing an individual set of credentials for access to ensure that misplaced or compromised credentials do not lead to unauthorized access to the resource.

- Ensure IAM users are given the minimal access privileges to AWS resources that still allow them to fulfill their job responsibilities.

- As a last line of defense against a compromised account, ensure all IAM users have multi-factor authentication activated for their individual accounts, and limit the number of IAM users with administrative privileges.

- Only allow IAM access keys in AWS accounts for development environments, and rotate those access keys regularly.

- Enforce a strong password policy requiring a minimum of 14 characters containing at least one number, one uppercase letter, and one symbol. Apply a password reuse policy that prevents users from reusing any of their last 24 passwords, along with a password expiration policy.
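
To make the composition rules of the password policy concrete, here is a small Go sketch that checks a candidate password against them. It is only an illustration: in AWS the rules are enforced by the account password policy itself, the function name `CheckPassword` is hypothetical, and the reuse and expiration rules are not covered here.

```go
package main

import (
	"fmt"
	"unicode"
)

// minLength mirrors the 14-character minimum described above.
const minLength = 14

// CheckPassword returns a list of rule violations; an empty list means
// the password satisfies the length and character-class rules.
func CheckPassword(pw string) []string {
	var hasDigit, hasUpper, hasSymbol bool
	length := 0
	for _, r := range pw {
		length++
		switch {
		case unicode.IsDigit(r):
			hasDigit = true
		case unicode.IsUpper(r):
			hasUpper = true
		case unicode.IsPunct(r) || unicode.IsSymbol(r):
			hasSymbol = true
		}
	}

	var problems []string
	if length < minLength {
		problems = append(problems, fmt.Sprintf("must be at least %d characters", minLength))
	}
	if !hasDigit {
		problems = append(problems, "must contain a number")
	}
	if !hasUpper {
		problems = append(problems, "must contain an uppercase letter")
	}
	if !hasSymbol {
		problems = append(problems, "must contain a symbol")
	}
	return problems
}

func main() {
	for _, p := range CheckPassword("short") {
		fmt.Println(p)
	}
}
```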

Limited Administrative Access of IAM users

Your CTO and other carefully selected IT executives should have administrator access to your AWS account(s). The administrator access granted to these users should align with their level of authority.

For one of our newer startups, only I, the CTO, have an IAM user with administrator access. By closely auditing access levels and granting access to a limited number of users, we strengthen our security posture.

Least-Privilege Access for IAM Users

Unrestricted or overly permissive user accounts increase the risk of, and the damage from, an external or internal threat. Administrators for your AWS account(s) need to limit a user’s permissions to a level where they can only do what’s necessary to accomplish their job duties.

With one of our newer startups, we have three different AWS accounts for specific environments: development, staging and production. Access to the AWS accounts for the production and staging environments is granted only to select employees who need it to carry out a specific job duty. Permissions are granted to IAM users on a case-by-case basis with privileges that match their request, and access is removed once the task is complete. While all our software engineers should have access to our development environment, we are still careful about who has access to this account.

Use IAM Roles for Amazon Services

Using IAM roles helps organizations like yours and ours reduce the risk of security compromises. With roles defined, users with lower levels of access can carry out tasks in various AWS services without being granted excessive or overly broad access. This approach allows specific access to AWS services and resources, reducing the attack surface available to bad actors.

You should use IAM roles to provide credentials to any of your services (websites, applications or golang bootstrap web apps). When using IAM roles, these credentials are dynamically created by AWS and automatically rotated.

No AWS access keys should be issued for any of your production or staging environments. IAM roles should be required for all services in all production and staging environments to prevent AWS account credentials from being shared or accidentally leaked.

Least-Privilege Access for IAM Roles

It is also best practice to add conditions to the policies that define access for IAM roles. Since we all must operate under least-privilege access, it is important that the policy for an IAM role further restricts access to only the specific resources and actions required.

All AWS permissions required for a service (website or application) should be granted by resource and action, limited to only the specific privileges the web application requires, including your GO lang bootstrap web apps.
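
As an illustration of granting permissions by resource and action with a condition, the Go sketch below builds an IAM policy document as JSON. The bucket name, prefix and the `UploadsPolicy` helper are hypothetical; the document layout (`Version`, `Statement`, `Effect`, `Action`, `Resource`, `Condition`) and the `aws:SecureTransport` condition key follow the standard IAM policy format.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Statement and Policy model the subset of the IAM policy JSON format
// that this example needs.
type Statement struct {
	Effect    string                       `json:"Effect"`
	Action    []string                     `json:"Action"`
	Resource  []string                     `json:"Resource"`
	Condition map[string]map[string]string `json:"Condition,omitempty"`
}

type Policy struct {
	Version   string      `json:"Version"`
	Statement []Statement `json:"Statement"`
}

// UploadsPolicy (hypothetical helper) allows reading and writing objects
// under a single prefix of a single bucket, and only over TLS.
func UploadsPolicy(bucket, prefix string) Policy {
	return Policy{
		Version: "2012-10-17",
		Statement: []Statement{{
			Effect:   "Allow",
			Action:   []string{"s3:GetObject", "s3:PutObject"},
			Resource: []string{fmt.Sprintf("arn:aws:s3:::%s/%s/*", bucket, prefix)},
			Condition: map[string]map[string]string{
				"Bool": {"aws:SecureTransport": "true"},
			},
		}},
	}
}

func main() {
	// "example-app-bucket" and "uploads" are placeholder names.
	out, err := json.MarshalIndent(UploadsPolicy("example-app-bucket", "uploads"), "", "  ")
	if err != nil {
		panic(err)
	}
	fmt.Println(string(out))
}
```

The point of the sketch is what it leaves out: there is no wildcard action such as `s3:*` and no bucket-wide resource, so a compromised service can reach only the objects it actually needs.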

Root IAM User Protected

The AWS root user that was created when you initially signed up with AWS has unrestricted access to all AWS resources. There’s no way to limit permissions on a root account. For this reason, AWS recommends that you restrict use of the root IAM user and that you do not generate access keys for it. The root IAM user for an account should always be protected by multi-factor authentication to guard against unauthorized logins.

In our startups, I, the CTO, am the only individual with access to the root IAM user for an account. My use of it is very limited and on an as-needed basis. Whoever on your team has access to the root IAM user should likewise use it sparingly and only as needed.

Instead of using the root IAM user, you should create individual AWS IAM users - even for yourself if you are the CTO. Then you should grant each user permissions based on the principle of least privilege: granting them only the permissions required to perform their assigned tasks. The IAM user for our CTO is the only user with administrative access.

Rotate AWS API Keys Regularly

With AWS, services running outside of our environment require keys to help keep our environments secure. Although roles remove the need to manage keys for staging and production environments, API keys may still be used for development (dev) and testing environments. API keys for dev and testing environments must be rotated regularly. By rotating keys regularly, we control how long a key is considered valid, limiting the negative impact to the business if a compromise occurs.

Accordingly, we rotate API keys every 180 days and use calendar invites to ensure rotation is completed on time.

Strong Passwords

All our AWS IAM users are required to use a strong password (enforced by password policy) and are required to have multi-factor authentication enabled.

Multi-Factor Authentication

Businesses like yours and ours need more than the single layer of protection provided by usernames and passwords, which can be cracked, stolen or shared. Keep in mind that AWS IAM controls may provide access not just to the infrastructure but also to the applications installed and the data being used. With multi-factor authentication (MFA) in place, each login requires a code in addition to the password.

All your AWS accounts should require that IAM users enable multi-factor authentication. Details of all IAM users are reviewed to ensure each has MFA configured and enabled consistently.

AWS Secrets Manager

While least privilege access and temporary credentials are important, it is equally important that your users are managing their credentials properly — from rotation to storage. We use AWS Secrets Manager to centrally manage the lifecycle of secrets used in our organization, including rotation, audits, and access control. Most importantly this service allows you to rotate secrets automatically.

Secrets Manager also offers built-in integration for MySQL, PostgreSQL, and Amazon Aurora on Amazon RDS. We use these Secrets Manager integrations to manage the secure authentication of our services with corresponding databases.
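
Once a service has retrieved a database secret, it still has to turn it into a connection string. The Go sketch below assumes the secret value is a JSON document with `host`, `port`, `username`, `password` and `dbname` keys and a numeric port, which is the layout commonly used for RDS secrets; the `DSNFromSecret` helper, the sample values and the `sslmode=require` parameter are assumptions for illustration, and fetching the secret itself is left out.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
	"net"
	"net/url"
	"strconv"
)

// rdsSecret models the assumed JSON layout of a database secret.
type rdsSecret struct {
	Host     string `json:"host"`
	Port     int    `json:"port"`
	Username string `json:"username"`
	Password string `json:"password"`
	DBName   string `json:"dbname"`
}

// DSNFromSecret (hypothetical helper) converts the raw secret JSON into
// a PostgreSQL connection URL. Credentials are escaped by net/url.
func DSNFromSecret(raw []byte) (string, error) {
	var s rdsSecret
	if err := json.Unmarshal(raw, &s); err != nil {
		return "", fmt.Errorf("decode secret: %w", err)
	}
	if s.Host == "" || s.Username == "" {
		return "", errors.New("secret is missing host or username")
	}
	u := url.URL{
		Scheme:   "postgres",
		User:     url.UserPassword(s.Username, s.Password),
		Host:     net.JoinHostPort(s.Host, strconv.Itoa(s.Port)),
		Path:     "/" + s.DBName,
		RawQuery: url.Values{"sslmode": {"require"}}.Encode(),
	}
	return u.String(), nil
}

func main() {
	// Placeholder secret; a real one would be fetched at startup.
	raw := []byte(`{"host":"db.example.internal","port":5432,"username":"app","password":"s3cret","dbname":"appdb"}`)
	dsn, err := DSNFromSecret(raw)
	if err != nil {
		panic(err)
	}
	// Avoid logging the DSN itself because it contains the password.
	fmt.Println("built DSN of length", len(dsn))
}
```

Because the connection string is rebuilt from the secret each time the service starts, a rotated password is picked up without any change to the application's configuration.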

AWS Fargate

AWS Fargate is a serverless compute engine for our containers on Elastic Container Service (ECS). Fargate makes it easy for us to focus on building our applications including websites. Fargate removes the need to provision and manage servers, letting us specify and pay for resources per application, and improves security through application isolation by design.

Individual ECS tasks each run in their own dedicated kernel runtime environment and do not share CPU, memory, storage, or network resources with other tasks and pods. This ensures workload isolation and improved security for each task.

Since Amazon manages the underlying IT infrastructure for Fargate, more of the security responsibility is shifted from us to Amazon. For applications running with Fargate, we only have to focus on security of the application and its container.

When you use the SaaS Startup Kit and corresponding GO DevOps tools, which leverage Fargate, you take advantage of this shift of security responsibility from you to Amazon. Therefore, we recommend building and deploying appropriate applications with Fargate.

Auto Scaling to dampen DDoS effects

Amazon Auto Scaling helps to ensure that you have ample resources available to handle the load of your applications. When Amazon Auto Scaling is enabled, it ensures that the resources for your applications never go below a specified capacity.

Services deployed to AWS Fargate should implement an auto scaling policy to ensure application load is being handled and help mitigate any DDoS effects. During a DDoS, the load of your application is increased substantially. Instead of having to manually scale resources, an auto scaling policy configured in Fargate can grow resources during a DDoS and ensure the application continues to operate under the increased load.
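
To show the idea behind such a policy, the Go sketch below computes a desired task count from a load metric, a target value, and minimum and maximum bounds. This is a simplified proportional model written for illustration, not the algorithm AWS uses internally; the `DesiredTasks` function and the numbers in `main` are hypothetical.

```go
package main

import (
	"fmt"
	"math"
)

// DesiredTasks scales the current task count in proportion to how far
// the observed metric (for example average CPU %) is from the target,
// then clamps the result between minTasks and maxTasks.
func DesiredTasks(current int, metric, target float64, minTasks, maxTasks int) int {
	if current < 1 {
		current = 1
	}
	desired := current
	if target > 0 {
		desired = int(math.Ceil(float64(current) * metric / target))
	}
	if desired < minTasks {
		return minTasks
	}
	if desired > maxTasks {
		return maxTasks
	}
	return desired
}

func main() {
	// 4 tasks at 90% CPU with a 60% target, bounded to 2..20 tasks.
	fmt.Println(DesiredTasks(4, 90, 60, 2, 20))
}
```

The minimum bound keeps capacity from dropping below the specified floor, while the maximum bound caps how far, and how expensively, the service can grow during a sustained attack.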

Follow Best Practices for AWS Database and Data Storage Services

Amazon offers several database services to its customers, including Amazon RDS (relational DB), Aurora (MySQL- and PostgreSQL-compatible relational DB), DynamoDB (NoSQL DB), Redshift (petabyte-scale data warehouse), and ElastiCache (in-memory cache). Amazon also provides data storage services with its Elastic Block Store (EBS) and S3 services. While we generally use Aurora, ElastiCache and S3 for projects that leverage the SaaS Startup Kit, it is important to follow these best practices for any new database or data storage service you implement.

- Ensure that no S3 Buckets are publicly readable/writeable.

- Encrypt data stored in EBS as an added layer of security.

- Encrypt Amazon RDS as an added layer of security.

- Restrict access to RDS instances to decrease the risk of malicious activities such as brute force attacks, SQL injections, or DoS attacks.

No Data Transfer Between Environments

Your development and staging (testing) environments, by necessity, do not have the same security controls as your production environments. If you move sensitive data such as client end user data from production into another environment, you open the data up to a much wider group of users. The result is a massively increased risk of a breach, whether malicious or inadvertent, and of a hefty fine from a regulator. Keeping client data, including their end user data, secure is of extreme importance.

No raw data should be copied or transferred out of its designated environment for any reason. No raw data should be copied from production into any other environment, not even for debugging and testing. Only securely anonymized data can be transferred between environments, using transfer processes approved by the person who has administrative access, such as your CTO. If you have a Security Team, include them in the decision to approve any transfer of securely anonymized data.
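
One building block for such a transfer process is replacing direct identifiers with keyed hashes before data leaves production. The Go sketch below uses HMAC-SHA256 for this; note that this is pseudonymization of a single field, not a complete anonymization scheme, and the `Pseudonymize` function, the key and the sample email are hypothetical.

```go
package main

import (
	"crypto/hmac"
	"crypto/sha256"
	"encoding/hex"
	"fmt"
	"strings"
)

// Pseudonymize returns a keyed hash of the value. The same input and key
// always produce the same token, so joins between tables still work,
// but the original value cannot be read from the token.
func Pseudonymize(key []byte, value string) string {
	mac := hmac.New(sha256.New, key)
	mac.Write([]byte(strings.ToLower(strings.TrimSpace(value))))
	return hex.EncodeToString(mac.Sum(nil))
}

func main() {
	// Placeholder key; a real key should stay in production only.
	key := []byte("example-key-kept-in-production")
	fmt.Println(Pseudonymize(key, "Jane.Doe@example.com"))
}
```

The key must never travel with the data: anyone holding both can test guesses against the tokens, which is why the key would stay in the production environment.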

No Public Access Policy for AWS S3

You must ensure that the Amazon S3 Block Public Access feature is enabled at your AWS account level to restrict public access to all of your S3 buckets, including those that you create in the future. This feature overrides existing policies and permissions in order to block S3 public access and makes sure that this type of access is not granted to newly created buckets and objects.

The only reason objects on S3 should be publicly accessible is when static asset files need to be served to end users. For example, we have a GOlang bootstrap web app that allows users to interact with it via a web browser. That web application may have static files like logos or image files that need to be delivered and displayed to the end user. These static files are usually served in connection with AWS CloudFront or another Content Delivery Network (CDN). While an entire bucket should never be made publicly readable, a specific folder in a bucket or a subset of objects can be made read-only to the public so that those files can be served.
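
The rule that only a folder, never a whole bucket, may be public can be checked mechanically. The Go sketch below inspects the resource ARN of a public-read statement and reports whether it covers the entire bucket; the `IsBucketWide` helper and the bucket names are hypothetical, and the check only looks at the ARN, not at the rest of the policy.

```go
package main

import (
	"fmt"
	"strings"
)

// IsBucketWide reports whether an S3 resource ARN refers to the bucket
// itself or to every object in it, rather than to a specific prefix.
func IsBucketWide(resourceARN string) bool {
	rest := strings.TrimPrefix(resourceARN, "arn:aws:s3:::")
	_, keyPattern, found := strings.Cut(rest, "/")
	return !found || keyPattern == "" || strings.HasPrefix(keyPattern, "*")
}

func main() {
	for _, arn := range []string{
		"arn:aws:s3:::example-bucket/*",        // whole bucket
		"arn:aws:s3:::example-bucket/public/*", // one folder only
	} {
		fmt.Println(arn, "bucket-wide:", IsBucketWide(arn))
	}
}
```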

Involve Security Team throughout Development

If your organization is large enough to have a Security Team, invite them to bring their own application testing tools and methodologies into DevOps, including when pushing production code, without slowing down the development lifecycle. When your Security Team is included in the development lifecycle, they can also ensure that the end users of your websites and applications are using them in a secure manner.

Summary of AWS Best Practices for GOlang SaaS Apps

While there are lots of blog articles out there about general AWS best practices, we created this document to provide further insight on the specific use case of using AWS for software-as-a-service, and specifically, software that leverages our open-source SaaS Startup Kit and corresponding GO DevOps tools.

The AWS Trusted Advisor does provide several checks to ensure best practices for the security of your AWS accounts and you should follow them. In addition, hopefully this post provides further insight into the best practices for the security of your cloud environments hosted with AWS.

The foremost requirement when it comes to ensuring data security and a secure infrastructure is complete visibility. Trusted Advisor and the best practices outlined above help you do what it takes to reduce risk to your data and infrastructure. We created this summary of AWS best practices to show the depth and range of preventative security measures you should follow to keep your AWS accounts and client data secure:

- All AWS IAM users should use a strong password (enforced by password policy) and have multi-factor authentication enabled.

- CloudTrail needs to be enabled for all AWS regions and services to provide auditability.

- Harden the CloudTrail configuration (log file validation, bucket access logging, MFA delete and encryption) to prevent activity monitoring gaps.

- Provision access to resources for applications using IAM roles instead of providing an individual set of credentials to ensure that misplaced or compromised credentials don’t lead to unauthorized access to the resource.

- No AWS access keys will be issued for AWS production or staging environments. IAM roles are required.

- Ensure IAM users are given minimal access privileges to AWS resources that still allows them to fulfill their job responsibilities.

- Users accessing an environment in GovCloud must be citizens or nationals of the United States.

- All AWS permissions required for applications should be granted by resource and action, limited to only the specific privileges required for the web application.

- Applications and deployment processes should be designed to use AWS Secrets Manager to enable effective management of credentials.

- Applications should only be deployed via AWS Fargate so that the security of the underlying IT infrastructure is handled by AWS.

- Services deployed to AWS Fargate should implement an auto scaling policy to ensure application load is being handled, which will help mitigate any DDoS effects.

- No raw data should be copied or transferred out of the designated environment for any reason.

- When possible, encrypt data depending on AWS data storage services utilized.

- AWS S3 buckets should never have a public access policy. New S3 buckets should always block public objects unless specifically serving static asset files.

- Regularly verify SSL certificates to ensure certificate parameters are as expected and not misconfigured in a way that exposes potential vulnerabilities.

- Involve your Security Team throughout the software development life-cycle.

If you have any feedback on this document, let us know via twins@geeksaccelerator.com.

A software engineer with 21 years of experience and a passion for Golang and serverless infrastructure on AWS, he is inspired by people who push the limits of what is humanly possible. He is one of the core contributors to the SaaS Startup Kit and GO DevOps tools projects.
